There has been a quiet revolution taking place over the last few years. AI (Artificial Intelligence) has always been a goal in the IT world – a utopia in which we are watched over by machines of loving grace. Perhaps I’m late to the party, but in 2022 I feel it has really taken giant leaps forward in terms of practical application.

By now you’ve probably heard of DALL-E, OpenAI’s tool for generating “original” artworks based on natural language input (used to put together the cover art for this post, btw), and over the last few days I have been playing around with their ChatGPT tool.

ChatGPT – which is kind of an Eliza bot combined with a search engine (although this rather flippant description in no way does the tool justice) – lets you hold a natural language conversation in which the bot answers your queries with “original” content… and it is, as Elon Musk puts it… “Scarily good”.

In the AI’s own words: I am an artificial intelligence trained to assist with a wide range of tasks, including answering questions and providing information on a variety of topics.

You can still tell it’s a machine (for now), but that’s not the point. It is not an attempt to pass a Turing test; it is a learning intelligence and automation tool, one that is generationally more advanced and flexible than Siri or Alexa.

You’ll also note I put the word “original” in scare quotes – these models are trained on data that’s out there on the internet, so true creativity is currently beyond them. They can remix, and reuse, but not necessarily create, at least not yet… although you could argue that this is all humans do anyway. As an aside, I wonder whether having access to a large body of software code for an AI to digest played any part in Microsoft’s decision to buy GitHub a few years ago?

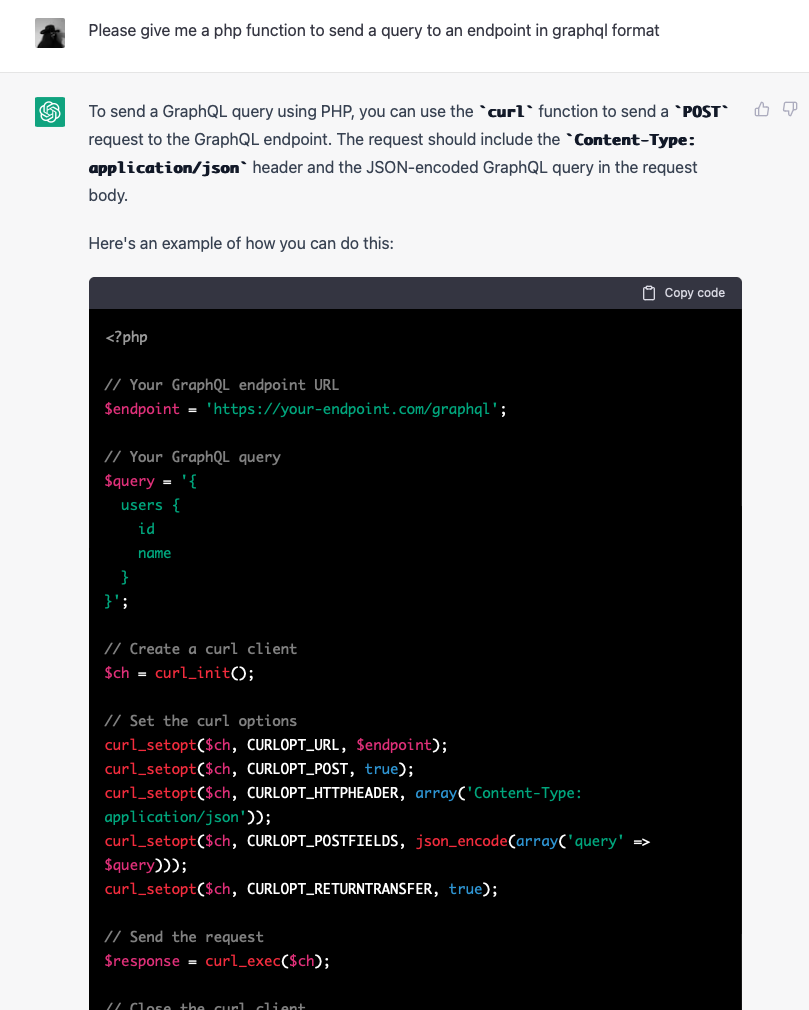

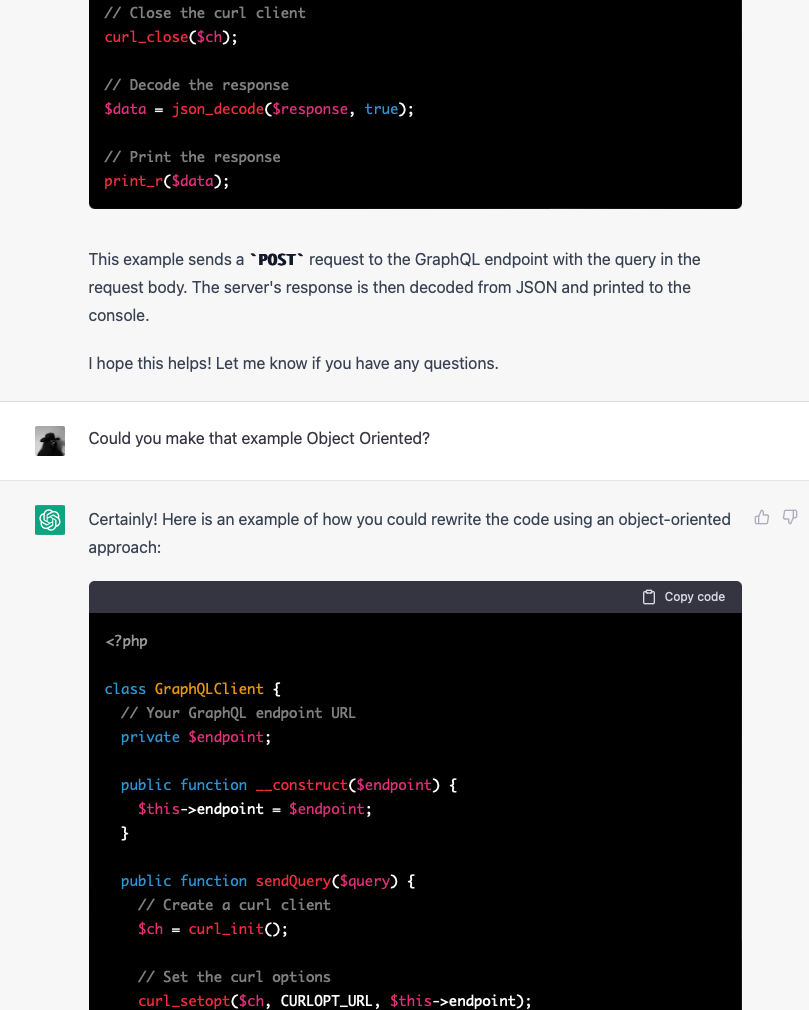

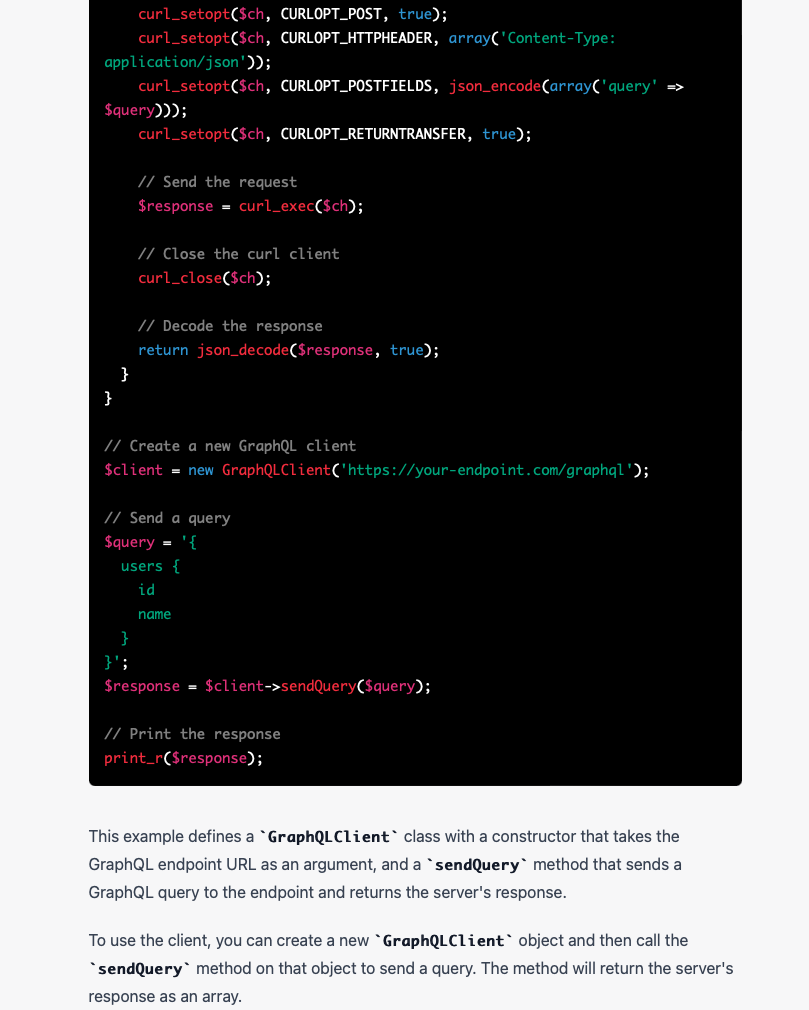

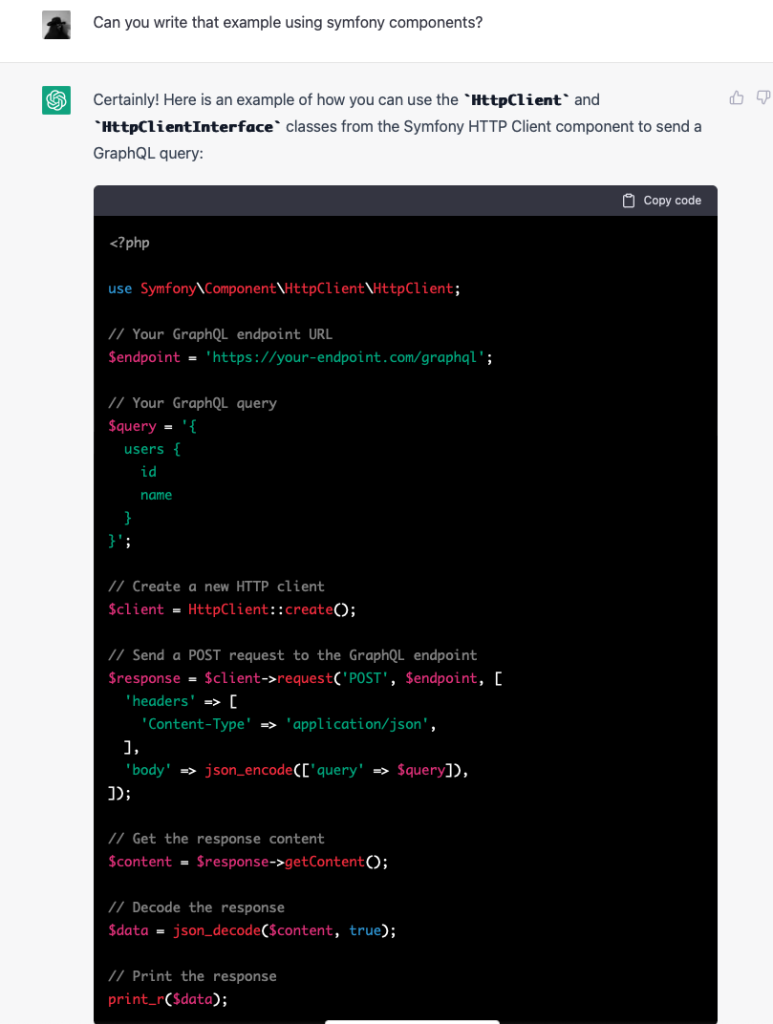

While my fellow software engineers chew that over, take a look at this brief chat and consider how safe your job is from automation:

Pretty good already, and my point is that this is still in the early stages. So if you think your job isn’t at risk from AI… you’re probably wrong.

AI is coming for your job.

Yes, probably even yours.

Adapt

Change is a comin’, but personally I’m really excited.

The more I play with these tools, the more certain I am that there is major opportunity here for those who choose to embrace it. Or, to put it another way, like it or not you had better find a way to make yourself hard to automate by creatively using these new tools, and to position yourself properly, because time only moves one way.

- Keep learning: Stay up to date with the latest developments in your field and consider getting additional training or education. This will make you more valuable to your employer and can help you stay ahead of the curve.

- Be flexible: Consider learning new skills that are in high demand and that may not be easily automated, such as leadership, communication, and problem-solving.

- Embrace change: AI and automation are transforming many industries, so be open to new ways of working and be willing to adapt to changes in your role or company.

- Create value: Focus on creating value for your employer and customers, rather than just completing tasks. This can help you stand out and become more valuable to your organization.

- Communicate: Talk to your employer about your concerns and try to understand their perspective on the use of AI in the workplace. This can help you find ways to work together and address any concerns you may have.

The above list was generated entirely by AI when I asked it “How can I avoid losing my job to AI?”. Not bad at all, even if it does read a little like an ESL listicle you might find on Buzzfeed (bye bye Buzzfeed journalists, I guess). Point two is something I very much agree with – technically aware people skills and leadership are (in my industry at least) going to be hard to automate away, at least until the Singularity (at which point everyone is screwed), and they are a high value add.

…but it does present a bit of a problem if you’re only now starting to cut your teeth as an entry-level coder. Humans are still going to be necessary… just fewer of them. See the self-service tills at your local supermarket.

I believe there are some major labour-saving benefits for individuals willing to take advantage. I personally have already used ChatGPT to produce an initial draft for an article or two, reply to a few awkward emails, write a profile for a dating app, and even come up with a rather tasty curry recipe for leftover chicken.

Could I have done all these myself? Yes. Did I want to? No, and having 80-90% of the job automated away meant I could get on with doing other things. I had to tweak a few things here or there, and maybe rephrase things so they sounded a bit more like me, but the AI almost always produced a workable draft. No more writer’s block.

Questioning Reality

How do you know what is real?

The “Dead Internet Theory” is a hypothetical scenario in which the internet becomes inhabited solely by automated systems or bots, and no human users remain. This could potentially occur if all human users of the internet were to suddenly disappear or become unable to access the internet for some reason. In such a scenario, the internet would continue to function, with bots carrying out tasks and interacting with each other, but there would be no human users to use or appreciate the internet.

I am starting to think this may actually be happening before our eyes.

With more and more of our interactions conducted through screens, and with AI getting more and more sophisticated, we are fast approaching the point where unless you actually experience it yourself in meat space you can never be sure something actually happened.

Yes, even if you see a video of it on the news.

It goes so much further than selectively editing a video to fit a particular narrative. With AI-powered deep fakes, you could generate entirely new videos of entirely fake events which look real (predicted decades ago by The Running Man).

If it isn’t already happening, I think it is only a matter of time before you start seeing these technologies applied to media, news, and especially interactions on social media. I wonder how much of the Twitter hellscape of the past few years has been caused by nation state actor AIs battling to control narratives? I’m not even going to touch on how big data analysis demonstrates just how predictable the actions of large groups of people are, and how on aggregate free will is pretty much an illusion.

Zoom meeting culture really took off in the pandemic, but with AI and deep fakes becoming more convincing and widespread, I suspect that in the future high-level meetings will have to be conducted in person… out of necessity.

As individuals we are all going to have to be far more sophisticated when interacting online, and maintain a healthy level of scepticism around absolutely everything we see or are told is happening. How we do this without tipping into a nihilistic state where absolutely nothing can be relied on as objective truth, I’m not sure.

I guess it will become even more important to turn off the screens and cultivate those real, in-person, human-to-human relationships.